Webhook Idempotency: Why It Matters and How to Implement It

A comprehensive technical guide to implementing idempotency for webhooks. Learn about idempotency keys, deduplication strategies, and implementation patterns with Node.js and Python code examples.

Your webhook endpoint processes a payment, then the same payment again—now your customer is charged twice. Idempotency prevents this scenario. It's critical for webhook architecture yet often overlooked until failures occur.

What Is Idempotency?

An operation is idempotent when performing it multiple times produces the same result as doing it once. For webhooks: processing the same event twice should have exactly the same effect as processing it once.

Examples:

Idempotent operations:

- Setting a user's email to "user@example.com" (doing it twice still results in the same email)

- Marking an order as "shipped" (it's still shipped after multiple updates)

- Recording a payment with a specific transaction ID (the record already exists)

Non-idempotent operations:

- Incrementing an account balance by $50 (each call adds another $50)

- Sending a notification email (each call sends another email)

- Creating a new order record (each call creates a duplicate)

The distinction matters because webhooks are delivered with "at-least-once" semantics, not "exactly-once."
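To make the two lists above concrete, here is a minimal sketch that replays the same delivery twice against one idempotent and one non-idempotent operation. The function names and the dictionary-based "records" are illustrative assumptions, not part of any real system.

```python
def set_email(user: dict, email: str) -> None:
    """Idempotent: repeating the call leaves the same final state."""
    user["email"] = email


def add_credit(account: dict, amount_cents: int) -> None:
    """Not idempotent: every call changes the balance again."""
    account["balance_cents"] += amount_cents


user = {"email": "old@example.com"}
account = {"balance_cents": 0}

# Simulate the same webhook being delivered twice.
for _ in range(2):
    set_email(user, "user@example.com")
    add_credit(account, 5000)

print(user["email"])             # user@example.com
print(account["balance_cents"])  # 10000, not the intended 5000
```

The email ends up correct no matter how many times the event arrives, while the balance is wrong after the first duplicate.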

Why Webhooks Need Idempotency

Webhook providers guarantee delivery with a caveat: they may deliver the same event multiple times due to:

- Network uncertainty: The response gets lost, so the sender retries.

- Timeout handling: Slow responses trigger retries even though processing succeeded.

- Infrastructure retries: Load balancers and CDNs retry automatically.

- Exponential backoff: When an endpoint recovers in the middle of a retry schedule, both the original delivery and a retry can get through.

Studies show 2-5% of deliveries retry. Your code must handle duplicates gracefully.
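The lost-response case is easy to reproduce. The sketch below is a toy simulation rather than a real HTTP client: `Receiver`, `deliver`, and the `ack_lost_on_attempts` parameter are hypothetical names invented for illustration. It shows that the receiver does the work on every attempt, even though the sender only ever sees one acknowledgement.

```python
from typing import Optional, Set


class Receiver:
    """Toy webhook endpoint with no deduplication."""

    def __init__(self) -> None:
        self.processed = []

    def handle(self, event_id: str) -> int:
        self.processed.append(event_id)  # the side effect happens here
        return 200


def deliver(receiver: Receiver, event_id: str,
            ack_lost_on_attempts: Set[int], max_attempts: int = 3) -> Optional[int]:
    """Retry until the sender actually sees a 200; return the attempt number."""
    for attempt in range(1, max_attempts + 1):
        status = receiver.handle(event_id)
        if attempt in ack_lost_on_attempts:
            continue  # response lost in transit, so the sender retries
        if status == 200:
            return attempt
    return None


receiver = Receiver()
attempts = deliver(receiver, "evt_1", ack_lost_on_attempts={1})
print(attempts)            # 2
print(receiver.processed)  # ['evt_1', 'evt_1']
```

From the sender's point of view the event was delivered once, on the second attempt. From the receiver's point of view it was processed twice.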

Real damage from missing idempotency:

- Double-charging customers, duplicate refunds, wrong balances

- Shipping same order twice, inventory errors

- Duplicate accounts, multiple welcome emails

- Discounts applied multiple times, billing errors

These happen daily in systems without proper idempotency.

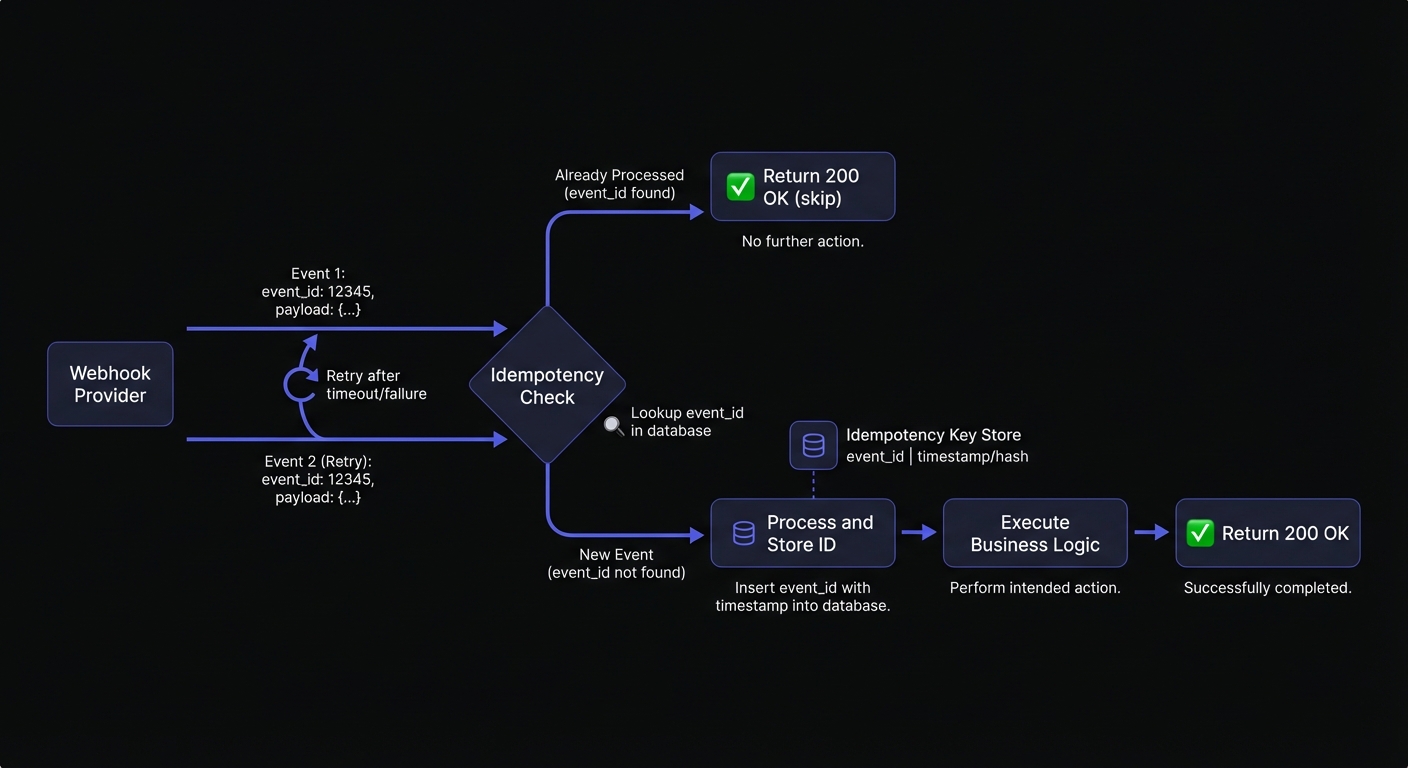

Idempotency Keys: Your First Line of Defense

An idempotency key is a unique identifier that represents a specific event or operation. By tracking which keys you've already processed, you can safely ignore duplicates.

Types of Idempotency Keys

Event IDs: Most webhook providers include a unique event ID with each delivery. Stripe event IDs start with evt_, GitHub sends a delivery GUID in the X-GitHub-Delivery header, and Shopify sends an X-Shopify-Webhook-Id header.

Natural keys: Sometimes the payload itself contains a natural unique identifier—a transaction ID, order number, or combination of fields that uniquely identifies the event.

Computed keys: When no unique identifier exists, you can generate one by hashing the payload contents or combining multiple fields.
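A computed key can be sketched as a hash over a canonical serialization of the payload. The helper name `compute_idempotency_key` and the example field names are assumptions made for illustration. If the payload carries fields that change between retries (a delivery timestamp, for example), pass only the stable fields.

```python
import hashlib
import json
from typing import List, Optional


def compute_idempotency_key(payload: dict, fields: Optional[List[str]] = None) -> str:
    """Derive a stable key from a payload (hypothetical helper).

    If `fields` is given, only those top-level fields contribute to the key;
    otherwise the whole payload is hashed.
    """
    subset = payload if fields is None else {name: payload.get(name) for name in fields}
    # sort_keys and fixed separators give a canonical serialization, so key
    # order and whitespace in the incoming JSON do not change the hash.
    canonical = json.dumps(subset, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


key_a = compute_idempotency_key({"order_id": "ord_1", "amount": 5000})
key_b = compute_idempotency_key({"amount": 5000, "order_id": "ord_1"})
print(key_a == key_b)  # True: field order does not matter

first = compute_idempotency_key({"order_id": "ord_1", "sent_at": 1}, fields=["order_id"])
retry = compute_idempotency_key({"order_id": "ord_1", "sent_at": 2}, fields=["order_id"])
print(first == retry)  # True: the changing timestamp is excluded
```

Hashing the full payload is the safer default only when the provider resends byte-for-byte identical content. Otherwise an explicit field list avoids treating every retry as a new event.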

Idempotency Key Headers

Hook Mesh includes an X-HookMesh-Idempotency-Key header with every webhook delivery. This key remains consistent across retries, making it trivial to implement deduplication:

    // The handler must be async because it awaits the deduplication lookup
    app.post('/webhooks', async (req, res) => {
      const idempotencyKey = req.headers['x-hookmesh-idempotency-key'];

      // Use this key for deduplication
      if (await hasProcessedKey(idempotencyKey)) {
        return res.status(200).json({ status: 'already_processed' });
      }

      // Process the webhook...
    });

This works consistently regardless of the original webhook source.

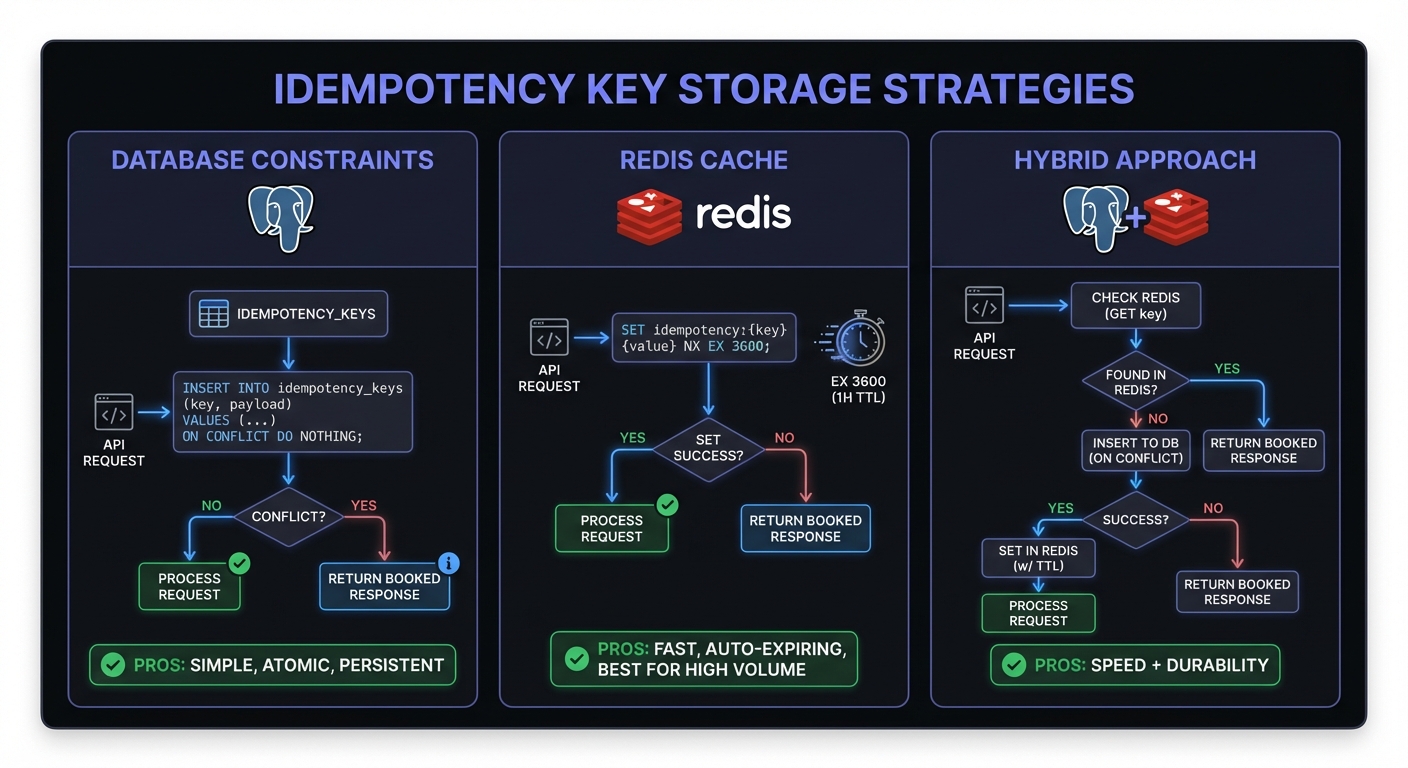

Deduplication Strategies

Strategy 1: Database Unique Constraints

The simplest approach uses database constraints to prevent duplicate records. This works well when webhook processing creates new records.

Node.js with PostgreSQL:

    const { Pool } = require('pg');

    const pool = new Pool();

    async function processPaymentWebhook(event) {
      const { id: eventId, data } = event;
      const { transaction_id, amount, customer_id } = data;

      try {
        // Requires a unique constraint on processed_payments.event_id.
        // ON CONFLICT DO NOTHING turns a duplicate insert into a no-op.
        const result = await pool.query(`
          INSERT INTO processed_payments
            (event_id, transaction_id, amount, customer_id, processed_at)
          VALUES ($1, $2, $3, $4, NOW())
          ON CONFLICT (event_id) DO NOTHING
        `, [eventId, transaction_id, amount, customer_id]);

        // rowCount is 0 when no row was inserted, meaning the event already exists
        if (result.rowCount === 0) {
          console.log(`Event ${eventId} was already processed, skipping`);
          return { duplicate: true };
        }

        // Continue with side effects (emails, fulfillment, etc.)
        await fulfillOrder(transaction_id);
        return { duplicate: false };
      } catch (error) {
        // Unique violation on a constraint other than event_id
        if (error.code === '23505') {
          console.log(`Duplicate event ${eventId} detected`);
          return { duplicate: true };
        }
        throw error;
      }
    }

Python with SQLAlchemy:

    from datetime import datetime, timezone

    from sqlalchemy.exc import IntegrityError

    # Assumes a `db` session object and a ProcessedPayment model
    # with a unique constraint on event_id.
    def process_payment_webhook(event):
        event_id = event['id']
        data = event['data']

        try:
            payment = ProcessedPayment(
                event_id=event_id,
                transaction_id=data['transaction_id'],
                amount=data['amount'],
                customer_id=data['customer_id'],
                processed_at=datetime.now(timezone.utc)
            )
            db.session.add(payment)
            db.session.commit()
        except IntegrityError:
            db.session.rollback()
            print(f'Duplicate event {event_id} detected')
            return {'duplicate': True}

        # Successfully inserted, continue processing. This runs outside the
        # try block so its own errors are not mistaken for duplicates.
        fulfill_order(data['transaction_id'])
        return {'duplicate': False}

Strategy 2: Redis-Based Deduplication

For high-throughput systems, checking a database for every webhook adds latency. Redis provides faster lookups with automatic expiration.

Node.js with Redis:

    const Redis = require('ioredis');

    const redis = new Redis();

    async function processWithRedisDedup(event, processingFn) {
      const eventId = event.id;
      const lockKey = `webhook:processed:${eventId}`;
      const lockTTL = 86400; // 24 hours

      // Try to set the key only if it doesn't exist (NX)
      const acquired = await redis.set(lockKey, Date.now(), 'NX', 'EX', lockTTL);

      if (!acquired) {
        console.log(`Event ${eventId} already processed`);
        return { duplicate: true };
      }

      try {
        await processingFn(event);
        return { duplicate: false };
      } catch (error) {
        // Processing failed, remove the key to allow retry
        await redis.del(lockKey);
        throw error;
      }
    }

    // Usage
    app.post('/webhooks', async (req, res) => {
      const event = req.body;

      try {
        const result = await processWithRedisDedup(event, async (evt) => {
          await handlePaymentEvent(evt);
        });
        res.json({ processed: !result.duplicate });
      } catch (error) {
        // A non-2xx response tells the provider to retry; the key is already removed
        res.status(500).json({ error: 'processing_failed' });
      }
    });

Python with Redis:

    import time

    import redis

    redis_client = redis.Redis()

    def process_with_redis_dedup(event, processing_fn):
        event_id = event['id']
        lock_key = f"webhook:processed:{event_id}"
        lock_ttl = 86400  # 24 hours

        # Try to acquire lock (set only if the key does not exist)
        acquired = redis_client.set(lock_key, time.time(), nx=True, ex=lock_ttl)

        if not acquired:
            print(f'Event {event_id} already processed')
            return {'duplicate': True}

        try:
            processing_fn(event)
            return {'duplicate': False}
        except Exception:
            # Processing failed, allow retry
            redis_client.delete(lock_key)
            raise

Real-World Scenarios

Payment Webhooks

Payment processing demands bulletproof idempotency. A duplicate charge damages customer trust and triggers chargebacks.

    async function handlePaymentSucceeded(paymentIntent) {
      const paymentId = paymentIntent.id;

      // Use transaction to ensure atomicity; resolves to true only for new payments
      const recorded = await db.transaction(async (trx) => {
        // Check if already processed
        const existing = await trx('payments')
          .where('stripe_payment_id', paymentId)
          .first();

        if (existing) {
          console.log(`Payment ${paymentId} already recorded`);
          return false;
        }

        // Record the payment. A unique constraint on stripe_payment_id is
        // still needed to close the race between the check and this insert.
        await trx('payments').insert({
          stripe_payment_id: paymentId,
          amount: paymentIntent.amount,
          customer_id: paymentIntent.customer,
          status: 'completed',
          created_at: new Date()
        });

        // Update account balance (idempotent because we checked first)
        await trx('accounts')
          .where('stripe_customer_id', paymentIntent.customer)
          .increment('balance', paymentIntent.amount);

        return true;
      });

      // Side effects outside transaction, and only for newly recorded payments
      if (recorded) {
        await sendReceiptEmail(paymentIntent);
      }
    }

Order Processing

Order webhooks often trigger fulfillment, inventory updates, and notifications. Each must be idempotent.

    def handle_order_created(order_data):
        order_id = order_data['id']

        # Idempotent order creation
        order, created = Order.objects.get_or_create(
            external_id=order_id,
            defaults={
                'customer_id': order_data['customer_id'],
                'total': order_data['total'],
                'status': 'pending'
            }
        )

        if not created:
            print(f'Order {order_id} already exists')
            return

        # Reserve inventory (check current state, not just decrement)
        for item in order_data['items']:
            Inventory.objects.filter(
                sku=item['sku'],
                reserved_for_order__isnull=True
            ).update(reserved_for_order=order.id)

        # Queue fulfillment (worker checks order status before processing)
        fulfillment_queue.enqueue(order.id)

User Creation

Duplicate user webhooks can create multiple accounts or send redundant welcome emails.

async function handleUserCreated(userData) {

const externalId = userData.id;

// Upsert pattern - idempotent by design

const user = await db.users.upsert({

where: { externalId },

create: {

externalId,

email: userData.email,

name: userData.name,

createdAt: new Date(),

welcomeEmailSent: false

},

update: {} // Don't update if exists

});

// Only send welcome email once

if (!user.welcomeEmailSent) {

await sendWelcomeEmail(user);

await db.users.update({

where: { id: user.id },

data: { welcomeEmailSent: true }

});

}

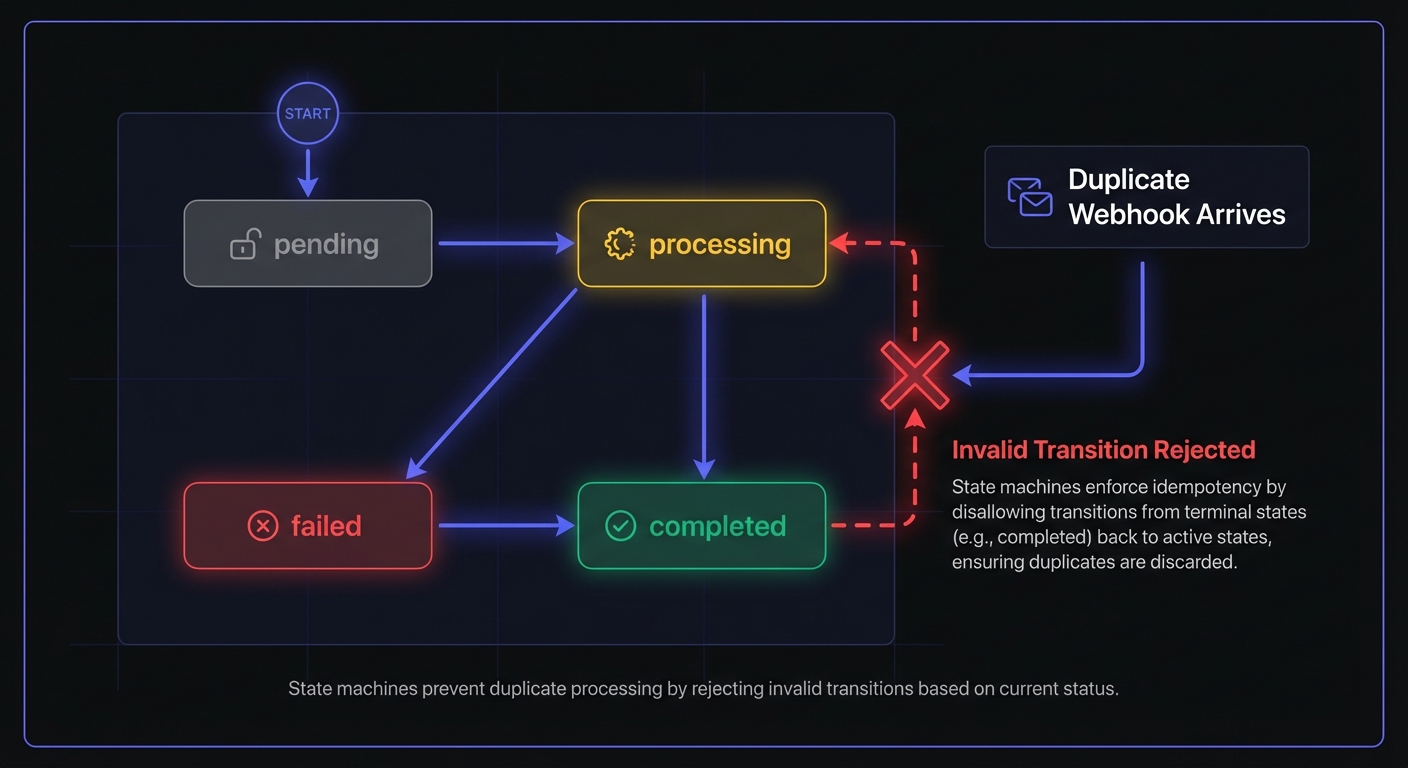

}State Machines for Idempotent Transitions

Simple deduplication checks whether you've seen an event. State machines go further—they validate whether a transition is valid given current state.

This matters for webhooks that arrive out of order. A payment.refunded webhook might arrive before payment.completed due to network conditions. Without state validation, you could process a refund for an incomplete payment.

    const VALID_TRANSITIONS = {
      pending: ['processing', 'cancelled'],
      processing: ['completed', 'failed'],
      completed: ['refunded'],
      failed: ['processing'], // Allow retry
      refunded: [], // Terminal state
      cancelled: [] // Terminal state
    };

    async function processWithStateMachine(event) {
      const { orderId, newStatus } = event.data;

      return await db.transaction(async (trx) => {
        const order = await trx('orders')
          .where('id', orderId)
          .forUpdate() // Lock row
          .first();

        if (!order) {
          throw new Error(`Order ${orderId} not found`);
        }

        const validNextStates = VALID_TRANSITIONS[order.status] || [];

        if (!validNextStates.includes(newStatus)) {
          console.log(`Invalid transition: ${order.status} -> ${newStatus}`);
          return { skipped: true, reason: 'invalid_transition' };
        }

        await trx('orders')
          .where('id', orderId)
          .update({ status: newStatus, updated_at: new Date() });

        return { skipped: false };
      });
    }

State machines make your webhook handler resilient to:

- Out-of-order delivery

- Duplicate events with different timestamps

- Race conditions from concurrent webhook deliveries
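The first two properties can be checked with a small in-memory sketch. It reuses the transition table from the example above, but the `apply_transition` helper and the dictionary standing in for the order row are assumptions made for illustration. There is no database or row locking here, so it says nothing about the concurrency case.

```python
VALID_TRANSITIONS = {
    "pending": ["processing", "cancelled"],
    "processing": ["completed", "failed"],
    "completed": ["refunded"],
    "failed": ["processing"],
    "refunded": [],
    "cancelled": [],
}


def apply_transition(order: dict, new_status: str) -> bool:
    """Apply the transition if it is valid from the current state."""
    if new_status not in VALID_TRANSITIONS.get(order["status"], []):
        return False  # duplicate or out-of-order event: leave state untouched
    order["status"] = new_status
    return True


order = {"status": "processing"}

# A refund arrives early, then the completion arrives twice,
# then the refund is redelivered.
events = ["refunded", "completed", "completed", "refunded"]
applied = [apply_transition(order, status) for status in events]

print(applied)          # [False, True, False, True]
print(order["status"])  # refunded
```

Note that the early refund is skipped, not queued. The order only reaches the refunded state because that event is delivered again later, so a handler built this way should signal a retryable failure (or store the event for later) when it skips an event that arrived too early.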

Async Processing: Queue First, Process Later

Synchronous webhook processing causes timeouts, which trigger retries, which create duplicates. Break this cycle by separating ingestion from processing.

    // Webhook ingestion - fast, minimal processing
    app.post('/webhooks', async (req, res) => {
      const event = req.body;
      const signature = req.headers['x-webhook-signature'];

      // Verify signature (fast)
      if (!verifySignature(event, signature)) {
        return res.status(401).json({ error: 'Invalid signature' });
      }

      // Persist immediately (fast). With a unique constraint on event_id,
      // a redelivered event is ignored instead of raising an error.
      await db('webhook_events')
        .insert({
          event_id: event.id,
          event_type: event.type,
          payload: JSON.stringify(event),
          status: 'pending',
          received_at: new Date()
        })
        .onConflict('event_id')
        .ignore();

      // Queue for processing (fast)
      await queue.add('process-webhook', { eventId: event.id });

      // Return 200 immediately (within 100ms)
      res.status(200).json({ received: true });
    });

    // Background processor - handles idempotency
    queue.process('process-webhook', async (job) => {
      const { eventId } = job.data;
      // 'failed' is claimable too, otherwise a queue retry would be skipped
      const claimable = ['pending', 'failed'];

      const event = await db('webhook_events')
        .where('event_id', eventId)
        .first();

      if (!event || !claimable.includes(event.status)) {
        return; // Unknown event, or already processed or processing
      }

      // Mark as processing (atomic update)
      const updated = await db('webhook_events')
        .where('event_id', eventId)
        .whereIn('status', claimable)
        .update({ status: 'processing' });

      if (updated === 0) {
        return; // Another worker grabbed it
      }

      try {
        await handleWebhookEvent(JSON.parse(event.payload));
        await db('webhook_events')
          .where('event_id', eventId)
          .update({ status: 'completed' });
      } catch (error) {
        await db('webhook_events')
          .where('event_id', eventId)
          .update({ status: 'failed', error: error.message });
        throw error; // Let queue retry
      }
    });

This pattern provides:

- Fast acknowledgment: Return 200 before processing, avoiding provider retries

- Built-in deduplication: Database status prevents double-processing

- Automatic retries: Queue handles failures without webhook duplication

- Visibility: Track webhook status for debugging

Reconciliation Jobs: Your Safety Net

Webhooks operate on "at-least-once" delivery—but what about "at-least-zero"? Network partitions, provider outages, and misconfigured endpoints can cause webhooks to never arrive.

Reconciliation jobs periodically fetch data from the source system to catch anything webhooks missed:

    from datetime import datetime, timedelta, timezone

    def reconcile_orders():
        """
        Run hourly to catch any orders missed by webhooks.
        """
        # Find orders we might have missed. The datetime is timezone-aware so
        # the Unix timestamp below is correct whatever the server's local zone.
        one_hour_ago = datetime.now(timezone.utc) - timedelta(hours=1)

        # Fetch recent orders from provider API
        provider_orders = stripe.Order.list(
            created={'gte': int(one_hour_ago.timestamp())},
            limit=100
        )

        for provider_order in provider_orders.data:
            # Check if we have this order
            local_order = Order.objects.filter(
                external_id=provider_order.id
            ).first()

            if not local_order:
                # Webhook missed - create order now
                print(f'Reconciliation: Creating missed order {provider_order.id}')
                handle_order_created({
                    'id': provider_order.id,
                    'customer_id': provider_order.customer,
                    'total': provider_order.amount,
                    'items': provider_order.items
                })
            elif local_order.status != provider_order.status:
                # Status mismatch - update
                print(f'Reconciliation: Updating order {provider_order.id} status')
                local_order.status = provider_order.status
                local_order.save()

        return {'reconciled': len(provider_orders.data)}

Reconciliation is your insurance policy. It handles:

- Webhooks lost during provider outages

- Events missed during your system's downtime

- Edge cases where webhook signatures fail validation

- Data drift from any cause

Run reconciliation jobs at least hourly for critical data. Your webhook handlers do the heavy lifting; reconciliation catches the edge cases.

Common Mistakes and How to Avoid Them

Mistake 1: Checking After Side Effects

    // WRONG: Side effect happens before duplicate check
    async function handleWebhook(event) {
      await sendNotificationEmail(event.user); // Oops, already sent!

      const existing = await db.events.findOne({ id: event.id });
      if (existing) return;

      await db.events.create({ id: event.id });
    }

Fix: Always check for duplicates before any side effects.

Mistake 2: Non-Atomic Operations

    // WRONG: Race condition between check and insert
    async function handleWebhook(event) {
      const existing = await db.events.findOne({ id: event.id });
      if (existing) return;

      // Another request could insert here!
      await db.events.create({ id: event.id });
    }

Fix: Use database transactions, unique constraints, or atomic Redis operations.
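One way to sketch the atomic version is to let a unique constraint do the check and the insert in a single statement. The example below uses SQLite from the Python standard library so it runs anywhere. The table name `processed_events` and the helper `claim_event` are assumptions made for illustration, and a production system would use its own database's equivalent (such as `ON CONFLICT DO NOTHING` in PostgreSQL).

```python
import sqlite3


def claim_event(conn: sqlite3.Connection, event_id: str) -> bool:
    """Return True only for the first caller to claim this event ID."""
    with conn:  # commits on success, rolls back on error
        cursor = conn.execute(
            "INSERT OR IGNORE INTO processed_events (event_id) VALUES (?)",
            (event_id,),
        )
    # rowcount is 1 if the row was inserted, 0 if the primary key already existed
    return cursor.rowcount == 1


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE processed_events (event_id TEXT PRIMARY KEY)")

print(claim_event(conn, "evt_123"))  # True: first delivery, safe to process
print(claim_event(conn, "evt_123"))  # False: duplicate, skip
```

Because there is no separate read before the write, there is no window in which a second request can slip in between the two.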

Mistake 3: Short TTLs on Deduplication Keys

If your Redis TTL is 1 hour but webhooks can be retried for 72 hours, late retries will be processed as new events. This is especially problematic when webhooks end up in a dead letter queue and are replayed much later.

Fix: Set TTLs longer than the maximum retry window of your webhook providers.
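As a rough sketch, the TTL can be derived from the longest retry window instead of being hard-coded. The provider names and window lengths below are made-up placeholders, and the 24-hour safety margin is an arbitrary assumption; check each provider's documentation for its real retry schedule.

```python
from datetime import timedelta

# Hypothetical retry windows; replace with documented values for your providers.
PROVIDER_RETRY_WINDOWS = {
    "provider_a": timedelta(hours=72),
    "provider_b": timedelta(hours=24),
}


def dedup_ttl_seconds(retry_windows: dict,
                      safety_margin: timedelta = timedelta(hours=24)) -> int:
    """TTL that outlives the longest retry window by a safety margin."""
    longest = max(retry_windows.values())
    return int((longest + safety_margin).total_seconds())


print(dedup_ttl_seconds(PROVIDER_RETRY_WINDOWS))  # 345600 (96 hours)
```

If events can be replayed manually from a dead letter queue, the margin needs to cover that replay horizon as well, or the deduplication record should live in a database without expiry.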

Mistake 4: Forgetting Side Effect Idempotency

Even with event deduplication, your side effects must be idempotent. Sending emails, updating inventory, and calling external APIs all need their own idempotency guarantees.

Fix: Track completion of each side effect separately, or use idempotent API calls where available.
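Tracking each side effect separately might look like the sketch below, which records completion per (event, side effect) pair in SQLite. The `side_effects` table, the `run_once` helper, and the simulated email and inventory functions are all hypothetical, and the code is single-threaded for clarity.

```python
import sqlite3
from typing import Callable

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE side_effects ("
    "event_id TEXT, effect TEXT, PRIMARY KEY (event_id, effect))"
)


def run_once(event_id: str, effect_name: str, effect: Callable[[], None]) -> bool:
    """Run `effect` unless it is already recorded as done for this event."""
    done = conn.execute(
        "SELECT 1 FROM side_effects WHERE event_id = ? AND effect = ?",
        (event_id, effect_name),
    ).fetchone()
    if done:
        return False
    effect()  # if this raises, nothing is recorded and a retry runs it again
    with conn:
        conn.execute(
            "INSERT INTO side_effects (event_id, effect) VALUES (?, ?)",
            (event_id, effect_name),
        )
    return True


emails_sent = []
inventory_calls = []


def send_receipt() -> None:
    emails_sent.append("receipt")


def update_inventory() -> None:
    inventory_calls.append("attempt")
    if len(inventory_calls) == 1:
        raise RuntimeError("inventory service unavailable")


def handle(event_id: str) -> None:
    run_once(event_id, "send_receipt", send_receipt)
    run_once(event_id, "update_inventory", update_inventory)


try:
    handle("evt_1")  # first delivery: email sent, inventory update fails
except RuntimeError:
    pass
handle("evt_1")      # retry: email skipped, inventory update succeeds

print(emails_sent)           # ['receipt']
print(len(inventory_calls))  # 2
```

Recording completion after the effect runs gives at-least-once behavior per side effect: a crash between the call and the insert repeats it. Recording before the call gives at-most-once instead. Where the downstream API accepts its own idempotency key, passing one closes that gap.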

Conclusion

Webhook idempotency is fundamental, not optional. At-least-once delivery means duplicates are inevitable, and your code must handle them. The same is true of webhook ordering: both problems follow from how distributed systems deliver messages.

A complete idempotency strategy combines multiple layers:

- Deduplication: Track processed event IDs with database constraints or Redis

- State machines: Validate transitions to handle out-of-order delivery

- Async processing: Queue webhooks immediately, process in background

- Reconciliation: Periodically sync with source systems to catch missed events

Test by deliberately sending duplicates. Send the same webhook 10 times rapidly and verify your system handles it correctly.
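A duplicate-delivery test can be sketched without any network at all. In the example below, `DedupingHandler` is a hypothetical stand-in for your real endpoint, with deduplication state held in memory behind a lock. In a real test suite you would fire the same requests at your HTTP handler and assert on the database instead.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class DedupingHandler:
    """Stand-in for a real endpoint; dedup state lives in memory."""

    def __init__(self) -> None:
        self._seen = set()
        self._lock = threading.Lock()
        self.side_effects = 0

    def handle(self, event: dict) -> str:
        with self._lock:  # check and record atomically
            if event["id"] in self._seen:
                return "duplicate"
            self._seen.add(event["id"])
            self.side_effects += 1
        return "processed"


def fire_duplicates(handler: DedupingHandler, event: dict, copies: int = 10) -> list:
    """Deliver the same event `copies` times from concurrent threads."""
    with ThreadPoolExecutor(max_workers=copies) as pool:
        return list(pool.map(lambda _: handler.handle(event), range(copies)))


handler = DedupingHandler()
results = fire_duplicates(handler, {"id": "evt_42"})

print(results.count("processed"), results.count("duplicate"))  # 1 9
print(handler.side_effects)                                    # 1
```

The assertion that matters is on the side-effect count, not on the responses: ten deliveries must produce exactly one charge, one email, or one order.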

Invest in idempotency today to prevent bugs, data inconsistencies, and late-night debugging tomorrow. Build it right and integrations scale reliably. See webhook reliability engineering for comprehensive patterns, or explore webhook retry strategies to understand the delivery side of the equation.
