Webhook Circuit Breakers: Protect Your Infrastructure

Learn how to implement the circuit breaker pattern for webhook delivery to prevent cascading failures, handle failing endpoints gracefully, and protect your infrastructure from retry storms.


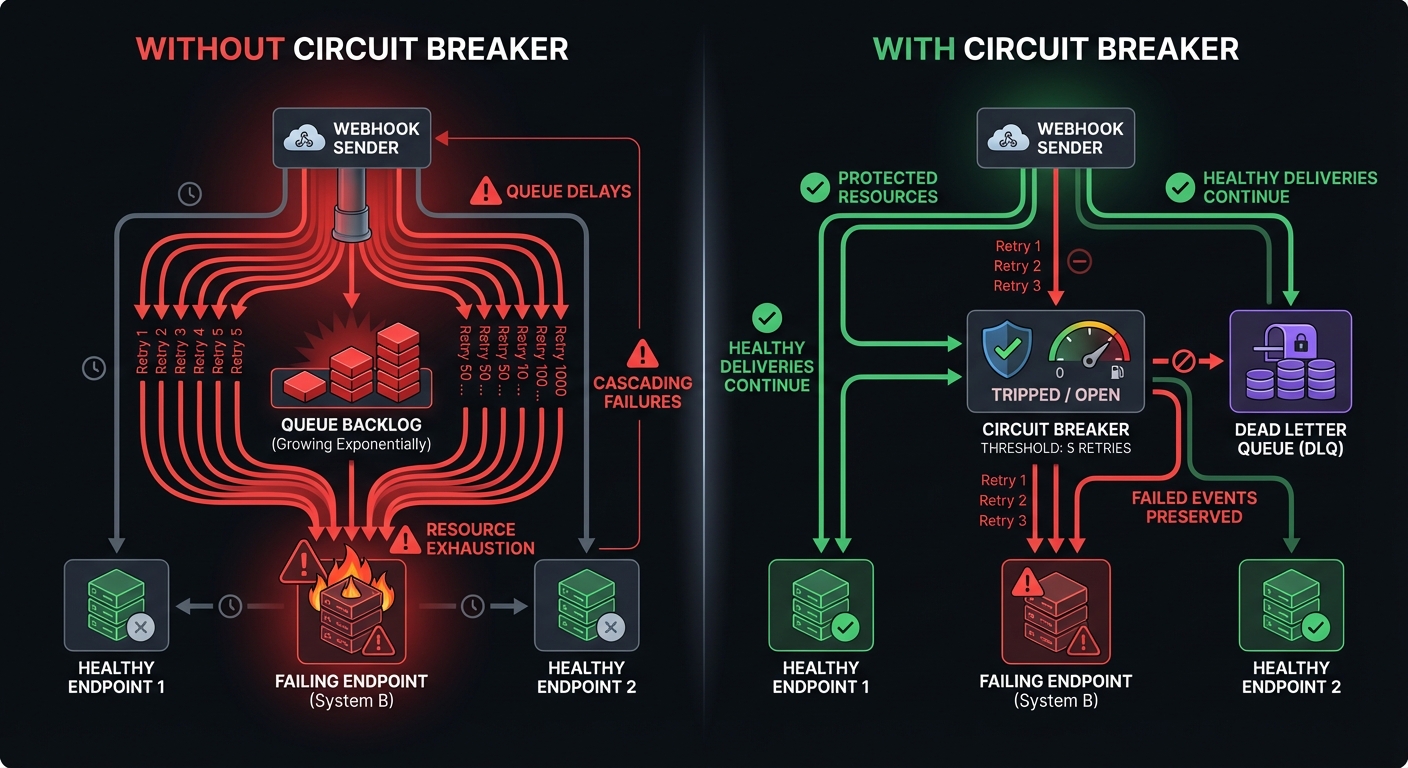

Without safeguards, a single failing endpoint can trigger cascading retries that overwhelm your servers, delay deliveries to healthy endpoints, and waste resources. The circuit breaker pattern addresses this by cutting off traffic to endpoints that keep failing, which makes it essential for resilient webhook infrastructure.

What Is a Circuit Breaker?

The circuit breaker pattern, borrowed from electrical engineering, stops a system from repeatedly attempting an operation that is likely to fail. When failures cross a threshold, the breaker "opens" and stops sending requests. This gives the failing service time to recover and keeps your own infrastructure from wasting resources on it.

For webhooks, a circuit breaker monitors delivery attempts per endpoint. When failures mount, it opens to halt requests, then later attempts recovery with test probes.

Why You Need Circuit Breakers

Retry storms: When an endpoint that receives hundreds of webhooks per hour starts failing, every delivery gets retried, so thousands of retries can pile up and delay deliveries to healthy endpoints.

Cascading failures: Each delivery that hangs until it times out ties up a worker for the full timeout. Enough of them slow the entire pipeline, and healthy endpoints wait minutes instead of milliseconds.

Resource exhaustion: Retries to doomed endpoints waste CPU, memory, network, and database operations without delivering value.

Zombie endpoints: Dead endpoints that fail continuously clog your delivery queue, create back pressure, and delay events to legitimate endpoints. Circuit breakers detect and isolate these zombies automatically.

Circuit Breaker States

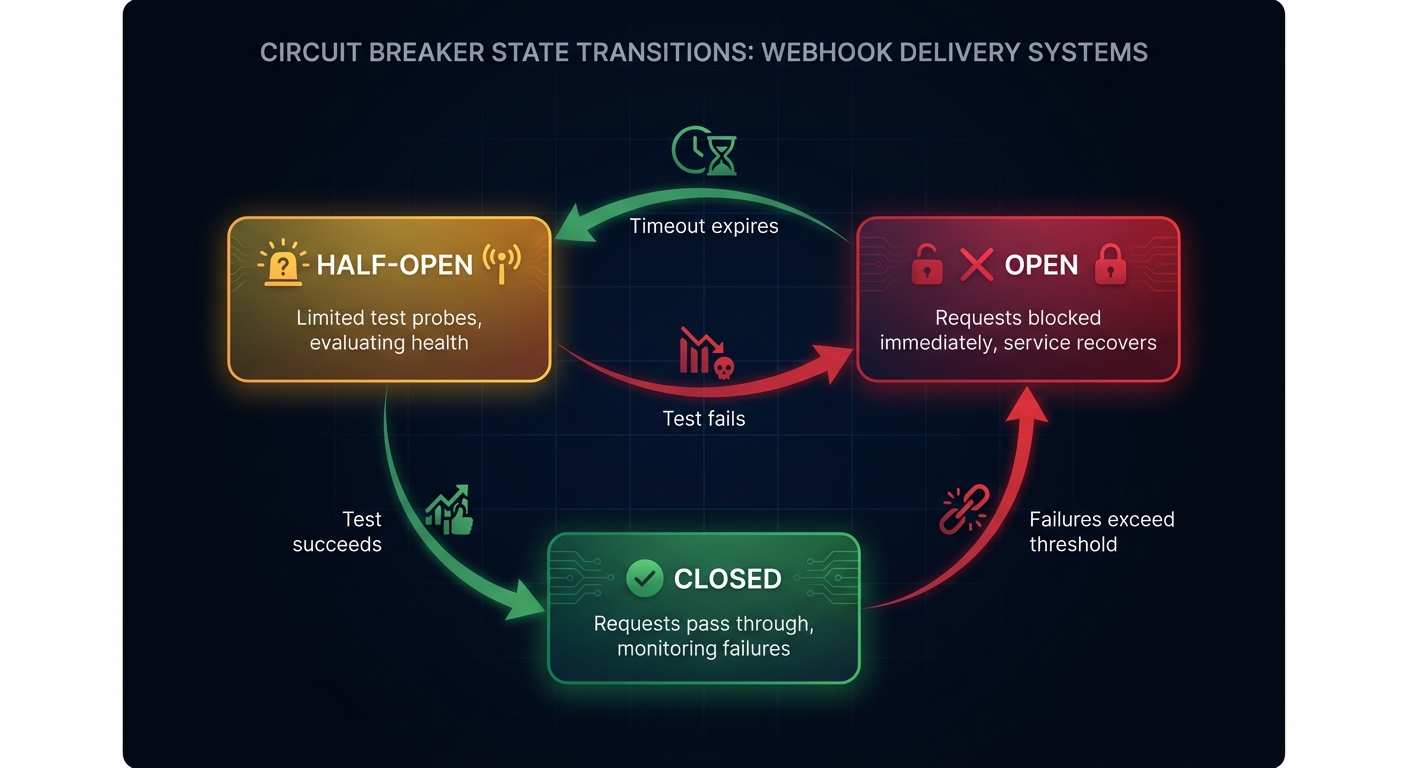

A circuit breaker operates in three states:

Closed: Default state. All requests pass through normally. The breaker monitors successes and failures within a rolling time window.

Open: When failures exceed the threshold, the breaker opens. Requests are rejected immediately without contacting the endpoint, giving it time to recover. Webhooks that can't be delivered are routed to a dead letter queue (DLQ) for later replay.

Half-Open: After a cooldown period, the breaker lets a limited number of test probes through. If they succeed, it returns to closed. If a probe fails, it reopens and waits longer before trying again.
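The rolling time window used in the closed state can be kept as a list of timestamped outcomes that is pruned on every read. Below is a minimal single-process sketch; the `RollingWindow` class and its methods are illustrative names, not part of any library.

```typescript
// Sliding-window outcome counter: only outcomes recorded within the last
// `windowMs` milliseconds count toward the error rate.
class RollingWindow {
  private events: { at: number; ok: boolean }[] = [];

  constructor(private readonly windowMs: number) {}

  record(ok: boolean, now: number = Date.now()): void {
    this.events.push({ at: now, ok });
    this.prune(now);
  }

  // Failure and total counts for the window ending at `now`
  counts(now: number = Date.now()): { failures: number; total: number } {
    this.prune(now);
    const failures = this.events.filter((e) => !e.ok).length;
    return { failures, total: this.events.length };
  }

  // Error rate as a percentage (0-100); 0 when the window is empty
  errorRate(now: number = Date.now()): number {
    const { failures, total } = this.counts(now);
    return total === 0 ? 0 : (failures / total) * 100;
  }

  // Drop outcomes older than the window. Events are appended in time order,
  // so expired ones are always at the front of the array.
  private prune(now: number): void {
    const cutoff = now - this.windowMs;
    let i = 0;
    while (i < this.events.length && this.events[i].at <= cutoff) i++;
    if (i > 0) this.events.splice(0, i);
  }
}
```

Storing every outcome costs memory proportional to traffic. High-volume systems often keep per-second buckets of counts instead, which trades a little precision for constant memory.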

State Transition Logic

The key transitions:

- Closed to Open: Failure count reaches the threshold, or the error rate reaches the configured percentage within the sample window

- Open to Half-Open: Cooldown period expires (typically 30-60 seconds)

- Half-Open to Closed: A configured number of consecutive successful probes (typically 3-5)

- Half-Open to Open: Any probe failure (restarts the cooldown timer)
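These four transitions can also be written as a pure function, which makes them easy to unit-test without any I/O. This is a sketch: the event names and the `TransitionContext` and `Thresholds` fields are assumptions chosen to mirror the list above.

```typescript
type BreakerState = 'closed' | 'open' | 'half-open';
type BreakerEvent = 'failure' | 'success' | 'tick';

// Counts already include the event being evaluated
interface TransitionContext {
  failures: number;                  // Failures in the current window
  total: number;                     // Requests in the current window
  consecutiveProbeSuccesses: number; // Successful probes since entering half-open
  msSinceLastFailure: number;
}

interface Thresholds {
  failureThreshold: number;
  errorRateThreshold: number; // Percentage (0-100)
  minimumRequests: number;
  successThreshold: number;
  cooldownMs: number;
}

function nextState(state: BreakerState, event: BreakerEvent, ctx: TransitionContext, t: Thresholds): BreakerState {
  switch (state) {
    case 'closed': {
      if (event !== 'failure') return 'closed';
      const rate = ctx.total === 0 ? 0 : (ctx.failures / ctx.total) * 100;
      const tooManyFailures = ctx.failures >= t.failureThreshold;
      const rateTooHigh = ctx.total >= t.minimumRequests && rate >= t.errorRateThreshold;
      return tooManyFailures || rateTooHigh ? 'open' : 'closed';
    }
    case 'open':
      // Only the passage of time moves an open breaker
      return ctx.msSinceLastFailure >= t.cooldownMs ? 'half-open' : 'open';
    case 'half-open':
      if (event === 'failure') return 'open'; // Any probe failure reopens
      if (event === 'success' && ctx.consecutiveProbeSuccesses >= t.successThreshold) return 'closed';
      return 'half-open';
  }
}
```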

Implementing Circuit Breaker Logic

Here's a minimal, single-process implementation of a per-endpoint webhook circuit breaker:

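```typescript
// Thrown when a delivery is rejected because the breaker is open
class CircuitOpenError extends Error {
  constructor(
    public readonly endpointId: string,
    public readonly resetAt: number // Epoch ms when a probe will next be allowed
  ) {
    super(`Circuit open for endpoint ${endpointId}`);
    this.name = 'CircuitOpenError';
  }
}

type CircuitState = 'closed' | 'open' | 'half-open';

interface CircuitBreakerConfig {
  failureThreshold: number;   // Consecutive failures before opening
  successThreshold: number;   // Successful probes needed to close from half-open
  timeout: number;            // Cooldown in ms before trying half-open
  errorRateThreshold: number; // Percentage (0-100)
  minimumRequests: number;    // Min requests in the window before the rate is evaluated
  sampleWindow: number;       // Window length in ms (e.g., 10000)
}

class WebhookCircuitBreaker {
  // Protected (not private) so subclasses can extend the recovery logic
  protected state: CircuitState = 'closed';
  private consecutiveFailures = 0;
  private windowFailures = 0;
  private windowSuccesses = 0;
  private windowStart = Date.now();
  private lastFailureTime = 0;
  private halfOpenSuccesses = 0;

  constructor(
    protected readonly endpointId: string,
    protected readonly config: CircuitBreakerConfig
  ) {}

  async execute(deliveryFn: () => Promise<void>): Promise<void> {
    if (!this.canExecute()) {
      throw new CircuitOpenError(this.endpointId, this.getResetTime());
    }
    try {
      await deliveryFn();
      this.onSuccess();
    } catch (error) {
      this.onFailure();
      throw error;
    }
  }

  getState(): CircuitState {
    return this.state;
  }

  // When the cooldown ends and a half-open probe becomes possible
  getResetTime(): number {
    return this.lastFailureTime + this.config.timeout;
  }

  protected canExecute(): boolean {
    switch (this.state) {
      case 'closed':
        return true;
      case 'open':
        if (Date.now() - this.lastFailureTime >= this.config.timeout) {
          this.transitionTo('half-open');
          return true;
        }
        return false;
      case 'half-open':
        // This sketch does not cap the number of concurrent probes
        return true;
    }
  }

  protected onSuccess(): void {
    if (this.state === 'half-open') {
      this.halfOpenSuccesses++;
      if (this.halfOpenSuccesses >= this.config.successThreshold) {
        this.transitionTo('closed');
      }
      return;
    }
    this.rollWindow();
    this.windowSuccesses++;
    this.consecutiveFailures = 0; // A success breaks the failure streak
  }

  protected onFailure(): void {
    this.lastFailureTime = Date.now(); // Also restarts the cooldown
    if (this.state === 'half-open') {
      this.transitionTo('open'); // Any probe failure reopens the breaker
      return;
    }
    this.rollWindow();
    this.windowFailures++;
    this.consecutiveFailures++;
    if (this.shouldTrip()) {
      this.transitionTo('open');
    }
  }

  private shouldTrip(): boolean {
    if (this.consecutiveFailures >= this.config.failureThreshold) {
      return true;
    }
    const total = this.windowSuccesses + this.windowFailures;
    if (total < this.config.minimumRequests) {
      return false;
    }
    const errorRate = (this.windowFailures / total) * 100;
    return errorRate >= this.config.errorRateThreshold;
  }

  // Fixed (tumbling) window: counts reset once sampleWindow ms have elapsed.
  // Simpler than a true rolling window, at the cost of some precision.
  private rollWindow(): void {
    const now = Date.now();
    if (now - this.windowStart >= this.config.sampleWindow) {
      this.windowStart = now;
      this.windowFailures = 0;
      this.windowSuccesses = 0;
    }
  }

  protected transitionTo(newState: CircuitState): void {
    this.state = newState;
    this.halfOpenSuccesses = 0;
    if (newState === 'closed') {
      this.consecutiveFailures = 0;
      this.windowFailures = 0;
      this.windowSuccesses = 0;
      this.windowStart = Date.now();
    }
  }
}
```

When to Trip the Circuit Breaker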

Tuning the trip condition means balancing sensitivity against stability. Trip too eagerly and transient blips cut off healthy endpoints; trip too slowly and your infrastructure absorbs the load from a failing one.
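One way to get a feel for that trade-off is to replay the same sequence of delivery outcomes through the two trigger styles described next. The sketch below uses illustrative function names and, for simplicity, treats the whole sequence as a single sample window.

```typescript
// Outcomes: true = success, false = failure.
// Returns the index of the attempt at which the trigger fires, or -1.
function tripIndexConsecutive(outcomes: boolean[], threshold: number): number {
  let streak = 0;
  for (let i = 0; i < outcomes.length; i++) {
    streak = outcomes[i] ? 0 : streak + 1; // A success resets the streak
    if (streak >= threshold) return i;
  }
  return -1;
}

// Error-rate trigger, evaluated cumulatively after each attempt
function tripIndexErrorRate(outcomes: boolean[], ratePct: number, minimumRequests: number): number {
  let failures = 0;
  for (let i = 0; i < outcomes.length; i++) {
    if (!outcomes[i]) failures++;
    const total = i + 1;
    if (total >= minimumRequests && (failures / total) * 100 >= ratePct) return i;
  }
  return -1;
}
```

A flaky endpoint that fails every other request never trips a 5-in-a-row trigger but trips a 50% rule at its 20th request. A fully down, low-volume endpoint trips the consecutive trigger after five attempts but may never reach a 20-request minimum. That is why it helps to check both.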

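Consecutive failures: Trip after N failures in a row. This works well for low-volume endpoints that rarely fail:

```typescript
failureThreshold: 5 // Trip after 5 consecutive failures
```

Error rate thresholds: For high-volume endpoints, percentage-based triggers work better. An endpoint receiving 1,000 webhooks per minute will see occasional failures that shouldn't open the breaker on their own:

```typescript
errorRateThreshold: 50, // Trip when 50% of requests fail
minimumRequests: 20,    // Only calculate the rate after 20 requests
sampleWindow: 10000     // 10-second sample window
```

Timeout handling: Weight timeouts heavily. Each one holds a worker for the full timeout period, so it consumes more resources than an immediate failure:

```typescript
// Inside the failure handler: a timeout counts as three failures
const weight = isTimeout ? 3 : 1;
this.failures += weight;
```

HTTP Status Code Differentiation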

Not all failures are equal. Differentiate between:

- 5xx errors: Server failures. Count them toward tripping the breaker.

- 429 Too Many Requests: A throttling signal. Honor the Retry-After header instead of tripping immediately.

- 401/403: Authentication failures. These usually need the customer to fix their configuration, so automatic retries won't help.

- Timeouts: The most expensive failures. Weight them heavily in failure calculations.
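For the 429 case, honoring Retry-After means handling both forms the header can take in HTTP: a number of seconds, or an HTTP-date. Here is a minimal sketch; the helper name is illustrative, and it assumes the header is read with the Fetch API's `headers.get()`, which returns `string | null`.

```typescript
// Returns how long to wait (ms) before retrying, or null if the header is
// missing or malformed. `now` is injectable for testing.
function parseRetryAfter(header: string | null, now: number = Date.now()): number | null {
  if (header === null) return null;
  const value = header.trim();
  // Form 1: delay-seconds, a non-negative integer
  if (/^\d+$/.test(value)) return Number(value) * 1000;
  // Form 2: an HTTP-date such as "Wed, 21 Oct 2015 07:28:00 GMT"
  const at = Date.parse(value);
  if (Number.isNaN(at)) return null;
  return Math.max(0, at - now); // A date in the past means "retry now"
}
```

A worker can then reschedule the webhook for `Date.now() + delay` (for example, `parseRetryAfter(response.headers.get('retry-after'))`) rather than counting the response as a breaker failure.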

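The rules in the list above can be encoded as a classifier that maps each failed attempt to a weight:

```typescript
interface FailureWeight {
  weight: number;      // How much the failure counts toward the threshold
  shouldTrip: boolean; // Whether it can contribute to opening the breaker
  backoff?: boolean;   // Whether to wait for Retry-After instead
}

// Only called for failed attempts (non-2xx responses or timeouts)
function categorizeFailure(status: number, isTimeout: boolean): FailureWeight {
  if (isTimeout) return { weight: 3, shouldTrip: true };
  if (status === 429) return { weight: 0, shouldTrip: false, backoff: true };
  if (status >= 500) return { weight: 1, shouldTrip: true };
  if (status === 401 || status === 403) return { weight: 0, shouldTrip: false };
  // Other errors (e.g. other 4xx responses) count as ordinary failures
  return { weight: 1, shouldTrip: true };
}
```

Distributed Circuit Breakers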

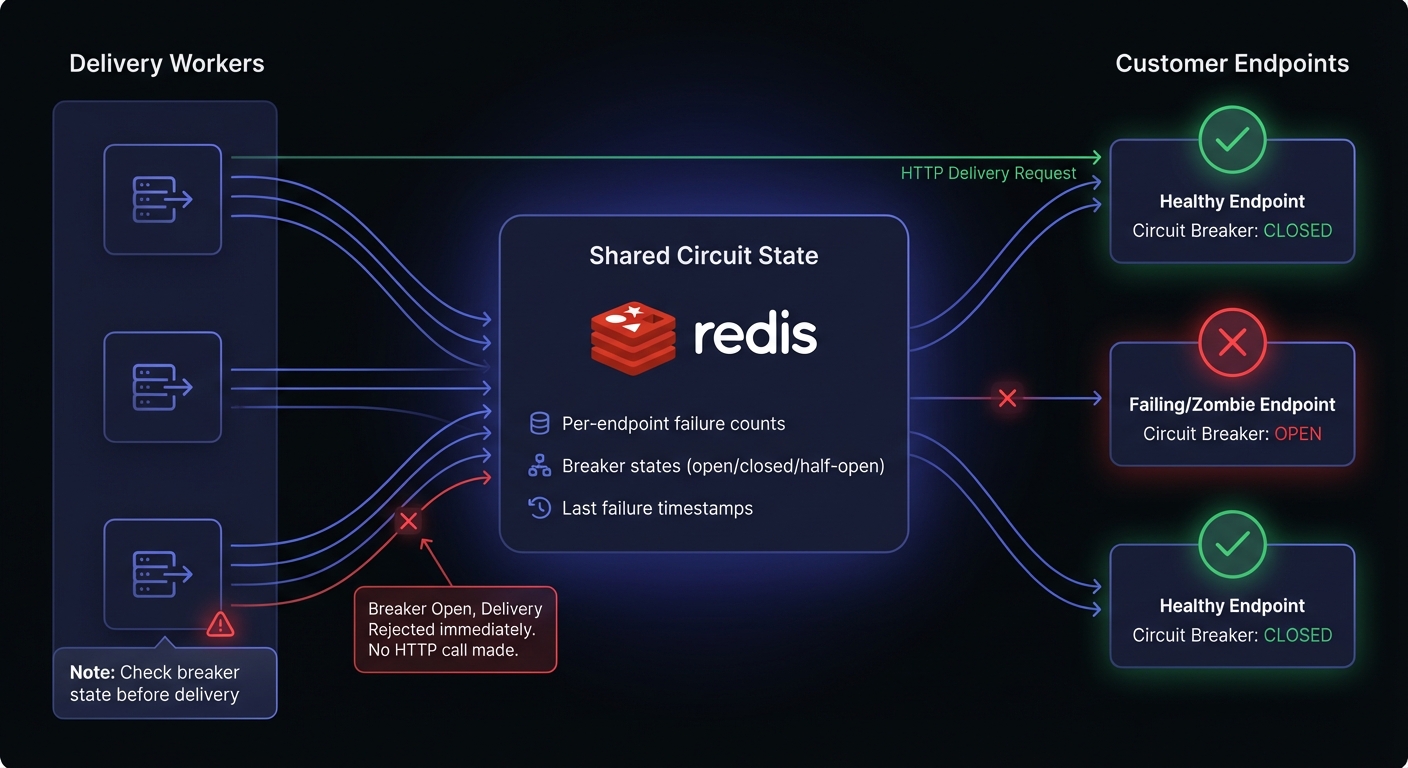

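In production, multiple delivery workers process webhooks concurrently. If each worker keeps its own in-memory breaker, failures are split across workers: each breaker trips independently, and until all of them have, the remaining workers keep sending to the failing endpoint. Workers need a shared, consistent view of breaker state.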
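A toy simulation makes the leak concrete. It assumes a completely failing endpoint, round-robin dispatch across workers, and a consecutive-failure threshold; the function is illustrative only.

```typescript
// Counts how many deliveries reach a completely failing endpoint before
// every worker stops sending to it.
function attemptsBeforeIsolation(workers: number, threshold: number, shared: boolean): number {
  const counters = new Array<number>(shared ? 1 : workers).fill(0);
  const open = new Array<boolean>(counters.length).fill(false);
  let attempts = 0;
  for (let i = 0; !open.every(Boolean); i++) {
    const slot = shared ? 0 : i % workers; // Breaker consulted by this worker
    if (open[slot]) continue;              // This worker's breaker rejects it
    attempts++;                            // Delivery reaches the endpoint and fails
    counters[slot]++;
    if (counters[slot] >= threshold) open[slot] = true;
  }
  return attempts;
}
```

With 4 workers and a threshold of 5, a shared breaker isolates the endpoint after 5 failed deliveries, while per-worker breakers let 20 through. The gap grows linearly with the number of workers.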

Shared State with Redis

Store breaker state in Redis for cross-worker coordination:

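```typescript
import Redis from 'ioredis';

type BreakerState = 'closed' | 'open' | 'half-open';

interface DistributedBreakerState {
  endpointId: string;
  state: BreakerState;
  failureCount: number;
  successCount: number;
  lastFailureTime: number;
  windowStart: number;
}

// Uses the CircuitBreakerConfig interface from the single-process example.
// The read-evaluate-write steps below are not atomic across workers; a
// production version would wrap them in a Lua script or MULTI/WATCH.
class DistributedCircuitBreaker {
  constructor(
    private redis: Redis,
    private config: CircuitBreakerConfig
  ) {}

  async canDeliver(endpointId: string): Promise<boolean> {
    const state = await this.getState(endpointId);
    if (state.state === 'closed') return true;
    if (state.state === 'open') {
      const elapsed = Date.now() - state.lastFailureTime;
      if (elapsed >= this.config.timeout) {
        await this.transitionTo(endpointId, 'half-open');
        return true;
      }
      return false;
    }
    // half-open: let probes through (this sketch does not cap how many)
    return true;
  }

  async recordResult(endpointId: string, success: boolean): Promise<void> {
    const key = `circuit:${endpointId}`;
    const multi = this.redis.multi();
    multi.hsetnx(key, 'windowStart', Date.now()); // Start a window if none exists
    if (success) {
      multi.hincrby(key, 'successCount', 1);
    } else {
      multi.hincrby(key, 'failureCount', 1);
      multi.hset(key, 'lastFailureTime', Date.now());
    }
    await multi.exec();
    await this.evaluateState(endpointId, success);
  }

  private async getState(endpointId: string): Promise<DistributedBreakerState> {
    const h = await this.redis.hgetall(`circuit:${endpointId}`);
    return {
      endpointId,
      state: (h.state as BreakerState | undefined) ?? 'closed',
      failureCount: Number(h.failureCount ?? 0),
      successCount: Number(h.successCount ?? 0),
      lastFailureTime: Number(h.lastFailureTime ?? 0),
      windowStart: Number(h.windowStart ?? Date.now()),
    };
  }

  // Every transition starts a fresh counting window
  private async transitionTo(endpointId: string, state: BreakerState): Promise<void> {
    await this.redis.hset(`circuit:${endpointId}`, {
      state,
      failureCount: 0,
      successCount: 0,
      windowStart: Date.now(),
    });
  }

  private async evaluateState(endpointId: string, success: boolean): Promise<void> {
    const s = await this.getState(endpointId);
    if (s.state === 'half-open') {
      if (!success) return this.transitionTo(endpointId, 'open');
      if (s.successCount >= this.config.successThreshold) {
        return this.transitionTo(endpointId, 'closed');
      }
      return;
    }
    if (s.state !== 'closed') return;
    const total = s.failureCount + s.successCount;
    const errorRate = total > 0 ? (s.failureCount / total) * 100 : 0;
    // Here failureThreshold applies to failures within the current window
    const tooManyFailures = s.failureCount >= this.config.failureThreshold;
    const rateTooHigh =
      total >= this.config.minimumRequests && errorRate >= this.config.errorRateThreshold;
    if (tooManyFailures || rateTooHigh) {
      await this.transitionTo(endpointId, 'open');
    } else if (Date.now() - s.windowStart >= this.config.sampleWindow) {
      await this.transitionTo(endpointId, 'closed'); // Window elapsed: reset counts
    }
  }
}
```

Leader Election for State Updates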

For high-traffic systems, designate a leader to evaluate breaker state while workers only read:

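```typescript
import os from 'node:os';
import Redis from 'ioredis';

const LOCK_KEY = 'circuit-leader-lock';
const LEASE_SECONDS = 30;
// Unique per process across hosts (a bare PID can collide between machines)
const LEADER_ID = `${os.hostname()}:${process.pid}`;

// Hypothetical database client; adapt to your driver's query API
interface Database {
  query(sql: string): Promise<Array<{ endpoint_id: string; failures: number | string; total: number | string }>>;
}

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

// SET with NX and EX acquires the lock atomically with a lease (the modern
// form of SETNX). If we already hold the lock, renew the lease instead;
// otherwise the second loop iteration would fail and leadership would end.
async function acquireOrRenewLeaderLock(redis: Redis): Promise<boolean> {
  const acquired = await redis.set(LOCK_KEY, LEADER_ID, 'EX', LEASE_SECONDS, 'NX');
  if (acquired === 'OK') return true;
  // GET + EXPIRE is not atomic; a Lua script would close that gap
  if ((await redis.get(LOCK_KEY)) === LEADER_ID) {
    await redis.expire(LOCK_KEY, LEASE_SECONDS);
    return true;
  }
  return false;
}

// Leader polls the database for failure metrics and updates Redis state.
// Returns when leadership is lost; callers can retry acquisition later.
async function leaderEvaluationLoop(redis: Redis, db: Database): Promise<void> {
  while (await acquireOrRenewLeaderLock(redis)) {
    const endpoints = await db.query(`
      SELECT endpoint_id,
             COUNT(*) FILTER (WHERE success = false) AS failures,
             COUNT(*) AS total
      FROM delivery_attempts
      WHERE created_at > NOW() - INTERVAL '10 seconds'
      GROUP BY endpoint_id
    `);
    for (const ep of endpoints) {
      // Some drivers (e.g. node-postgres) return COUNT(*) as a string
      const failures = Number(ep.failures);
      const total = Number(ep.total);
      // At least 20 requests and a 50% or higher error rate
      if (total >= 20 && failures / total >= 0.5) {
        // Record the trip time so workers measure the cooldown from now
        await redis.hset(`circuit:${ep.endpoint_id}`, {
          state: 'open',
          lastFailureTime: Date.now(),
        });
      }
    }
    await sleep(1000);
  }
}
```

Recovery Strategies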

How your circuit breaker recovers matters as much as how it trips. Poor recovery logic can cause oscillation between open and closed states, creating unpredictable delivery behavior.
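One common way to damp that oscillation, in line with the half-open state waiting longer after a failed probe, is to double the cooldown each time a probe fails, up to a cap. This is a sketch; the class name and the 30-second base and 5-minute cap used in the example are assumptions.

```typescript
class CooldownSchedule {
  private reopenCount = 0; // Failed recoveries since the breaker last closed

  constructor(private readonly baseMs: number, private readonly maxMs: number) {}

  // Cooldown to wait before the next half-open probe
  current(): number {
    return Math.min(this.maxMs, this.baseMs * 2 ** this.reopenCount);
  }

  // A probe failed and the breaker reopened: double the next cooldown
  onProbeFailed(): number {
    this.reopenCount++;
    return this.current();
  }

  // Recovery succeeded: the next trip starts from the base cooldown again
  onClosed(): void {
    this.reopenCount = 0;
  }
}
```

With a 30-second base and a 5-minute cap, successive failed probes wait 60, 120, 240, and then 300 seconds. In a multi-worker deployment you would typically add random jitter so breakers that tripped together don't all probe at the same moment.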

Gradual Recovery

Rather than immediately returning to full traffic after successful probes, gradually increase the load:

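```typescript
// Assumes WebhookCircuitBreaker declares state, canExecute, onSuccess and
// transitionTo as protected (not private), so they can be overridden here
class GradualRecoveryBreaker extends WebhookCircuitBreaker {
  private static readonly STEP = 10; // Percentage points added per successful probe
  // Start at 10%: starting at 0% would never admit a single probe
  private recoveryPercentage = GradualRecoveryBreaker.STEP;

  protected canExecute(): boolean {
    if (!super.canExecute()) return false; // Still open and cooling down
    if (this.state !== 'half-open') return true;
    // Gradually allow more traffic through; rejected deliveries surface
    // as CircuitOpenError and can be requeued
    return Math.random() * 100 < this.recoveryPercentage;
  }

  protected onSuccess(): void {
    if (this.state !== 'half-open') {
      super.onSuccess();
      return;
    }
    this.recoveryPercentage = Math.min(100, this.recoveryPercentage + GradualRecoveryBreaker.STEP);
    if (this.recoveryPercentage >= 100) {
      this.transitionTo('closed');
    }
  }

  protected transitionTo(newState: 'closed' | 'open' | 'half-open'): void {
    // Restart the ramp whenever recovery ends, whether it succeeded or failed
    if (newState !== 'half-open') this.recoveryPercentage = GradualRecoveryBreaker.STEP;
    super.transitionTo(newState);
  }
}
```

Health Check Probes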

Instead of using real webhook deliveries as probes, you can send dedicated health checks. That way you don't risk real customer events on an endpoint that may still be down:

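```typescript
// Hypothetical endpoint shape; healthCheckUrl is an optional dedicated URL
interface Endpoint {
  url: string;
  healthCheckUrl?: string;
}

// Uses the global fetch (Node 18+). Some endpoints reject HEAD with 405,
// which this treats as unhealthy.
async function probeEndpointHealth(endpoint: Endpoint): Promise<boolean> {
  try {
    const response = await fetch(endpoint.healthCheckUrl || endpoint.url, {
      method: 'HEAD',
      // Fetch has no `timeout` option; abort the request after 5 seconds instead
      signal: AbortSignal.timeout(5000),
    });
    return response.ok;
  } catch {
    // Network errors and timeouts both count as unhealthy
    return false;
  }
}
```

Manual Reset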

Sometimes automated recovery isn't appropriate. Provide operators with manual control for situations requiring human judgment:

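```typescript
// Assumes WebhookCircuitBreaker declares canExecute and transitionTo as
// protected (not private), so they can be used and overridden here
class ManualResetBreaker extends WebhookCircuitBreaker {
  private manuallyOpened = false;

  manualOpen(reason: string): void {
    this.manuallyOpened = true;
    this.transitionTo('open');
    this.logManualAction('open', reason);
  }

  manualClose(reason: string): void {
    this.manuallyOpened = false;
    this.transitionTo('closed');
    this.logManualAction('close', reason);
  }

  protected canExecute(): boolean {
    // A manual open blocks everything, including half-open probes
    if (this.manuallyOpened) return false;
    return super.canExecute();
  }

  // Keep an audit trail of operator actions (swap in your own logger)
  private logManualAction(action: 'open' | 'close', reason: string): void {
    console.info(`Circuit breaker manually ${action === 'open' ? 'opened' : 'closed'}: ${reason}`);
  }
}
```

Combining Circuit Breakers with Retries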

Circuit breakers and retry strategies work together. Retries handle transient failures; circuit breakers prevent retries from overwhelming failing endpoints.
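The delivery function in this section relies on a retry helper configured with exponential backoff and a 10-second maximum delay. One way such a helper could compute its delays is exponential backoff with "full jitter" (a random delay between zero and the exponential ceiling). This is an assumed sketch, not the helper's actual implementation, and both function names are illustrative.

```typescript
// Delay before retry number `attempt` (1-based): a random value in
// [0, min(maxDelayMs, baseMs * 2^(attempt - 1))).
function retryDelayMs(
  attempt: number,
  baseMs: number,
  maxDelayMs: number,
  random: () => number = Math.random // Injectable for testing
): number {
  const ceiling = Math.min(maxDelayMs, baseMs * 2 ** (attempt - 1));
  return Math.floor(random() * ceiling);
}

// Runs fn, retrying up to maxRetries times; rethrows the last error so the
// caller can record a single circuit breaker failure for the whole sequence.
async function withRetries<T>(
  fn: () => Promise<T>,
  maxRetries: number,
  baseMs: number,
  maxDelayMs: number
): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    try {
      return await fn();
    } catch (error) {
      if (attempt >= maxRetries) throw error;
      const delay = retryDelayMs(attempt + 1, baseMs, maxDelayMs);
      await new Promise((resolve) => setTimeout(resolve, delay));
    }
  }
}
```

Jitter spreads retries from many failed deliveries over time, so they don't all hit a recovering endpoint in the same instant.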

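A delivery path that consults the breaker before spending any retries might look like this:

```typescript
// Hypothetical types and helpers this sketch assumes exist elsewhere
interface Webhook { id: string; endpointId: string; payload: unknown }
interface DeliveryResult { status: string; queued?: boolean }
interface CircuitBreaker {
  canDeliver(endpointId: string): Promise<boolean>;
  recordSuccess(endpointId: string): Promise<void>;
  recordFailure(endpointId: string): Promise<void>;
  isOpen(endpointId: string): Promise<boolean>;
}
declare const deadLetterQueue: { add(webhook: Webhook): Promise<void> };
declare function deliverWithRetry(
  webhook: Webhook,
  options: { maxRetries: number; backoff: 'exponential'; maxDelay: number }
): Promise<DeliveryResult>;

async function deliverWithResilience(
  webhook: Webhook,
  breaker: CircuitBreaker
): Promise<DeliveryResult> {
  // Check the circuit breaker first
  if (!(await breaker.canDeliver(webhook.endpointId))) {
    // Route to the DLQ instead of retrying
    await deadLetterQueue.add(webhook);
    return { status: 'circuit_open', queued: true };
  }

  try {
    const result = await deliverWithRetry(webhook, {
      maxRetries: 3,
      backoff: 'exponential',
      maxDelay: 10000,
    });
    await breaker.recordSuccess(webhook.endpointId);
    return result;
  } catch (error) {
    // One breaker failure per webhook, recorded after its retries are exhausted
    await breaker.recordFailure(webhook.endpointId);
    if (await breaker.isOpen(webhook.endpointId)) {
      // The breaker just tripped: park the webhook in the DLQ and report it as
      // queued instead of rethrowing, so the caller doesn't retry it again
      await deadLetterQueue.add(webhook);
      return { status: 'circuit_open', queued: true };
    }
    throw error;
  }
}
```

Monitoring and Alerting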

Circuit breakers generate valuable operational signals. Emit events on state transitions for dashboards and alerts.

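```typescript
// logger, metrics and alerting stand in for your own logging, metrics and
// alerting clients; these declarations are placeholders
declare const logger: { info(message: string, context?: object): void };
declare const metrics: { increment(name: string, tags?: Record<string, string>): void };
declare const alerting: {
  notify(alert: { severity: 'info' | 'warning' | 'critical'; message: string; details?: unknown }): void;
};

interface CircuitBreakerEvent {
  type: 'trip' | 'reset' | 'half_open';
  endpointId: string;
  timestamp: Date;
  failureCount?: number;
  errorRate?: number;
  reason?: string;
}

function emitBreakerEvent(event: CircuitBreakerEvent): void {
  // Log for debugging
  logger.info('Circuit breaker state change', event);

  // Emit metric for dashboards. Tagging by endpoint ID can produce many
  // metric series on large platforms.
  metrics.increment('circuit_breaker.transitions', {
    type: event.type,
    endpoint: event.endpointId,
  });

  // Alert on trip (potential customer issue)
  if (event.type === 'trip') {
    alerting.notify({
      severity: 'warning',
      message: `Circuit breaker opened for endpoint ${event.endpointId}`,
      details: event,
    });
  }
}
```

See webhook observability for more on monitoring breaker states and building operational dashboards.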

Multi-Tenant Considerations

For SaaS webhook platforms serving multiple customers, implement per-tenant circuit breakers:

```typescript
// Each customer endpoint gets its own breaker, namespaced by tenant
const breakerKey = `circuit:${tenantId}:${endpointId}`;

// Aggregate metrics per tenant for dashboards
const tenantHealth = await redis.hgetall(`tenant:${tenantId}:health`);
// e.g. { total_endpoints: '50', healthy: '48', degraded: '2', failed: '0' }
```

This prevents one customer's failing endpoint from affecting other customers: when Customer A's endpoint fails, only that endpoint's breaker trips, and Customer B's webhooks continue normally.
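The per-tenant health summary could be derived from the states of a tenant's breakers. This sketch assumes a mapping the article doesn't specify: closed counts as healthy, half-open as degraded (recovering), and open as failed. The function name is illustrative.

```typescript
type BreakerState = 'closed' | 'open' | 'half-open';

interface TenantHealth {
  total_endpoints: number;
  healthy: number;
  degraded: number;
  failed: number;
}

// Assumed mapping: closed = healthy, half-open = degraded, open = failed
function summarizeTenantHealth(states: BreakerState[]): TenantHealth {
  const summary: TenantHealth = { total_endpoints: states.length, healthy: 0, degraded: 0, failed: 0 };
  for (const s of states) {
    if (s === 'closed') summary.healthy++;
    else if (s === 'half-open') summary.degraded++;
    else summary.failed++;
  }
  return summary;
}
```

With ioredis, the result could then be stored under the tenant's health key with `redis.hset(key, summary)`, since `hset` accepts an object of fields.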

Endpoint Health Tracking

Circuit breaker state needs to persist and be visible across your whole delivery infrastructure, so store it in a shared data store such as Redis:

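```typescript
import Redis from 'ioredis';

interface EndpointHealth {
  endpointId: string;
  state: 'closed' | 'open' | 'half-open';
  failureCount: number;
  lastFailure: Date | null;
  consecutiveSuccesses: number;
  errorRatePercent: number;
}

// Store in a Redis hash for fast access. Hash values must be strings or
// numbers, so the Date is serialized (empty string when there is none).
async function saveEndpointHealth(redis: Redis, health: EndpointHealth): Promise<void> {
  const key = `circuit:${health.endpointId}`;
  await redis.hset(key, {
    endpointId: health.endpointId,
    state: health.state,
    failureCount: health.failureCount,
    lastFailure: health.lastFailure ? health.lastFailure.toISOString() : '',
    consecutiveSuccesses: health.consecutiveSuccesses,
    errorRatePercent: health.errorRatePercent,
  });
  // Set a 24-hour TTL to auto-clean up breakers for inactive endpoints
  await redis.expire(key, 86400);
}
```

Conclusion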

Circuit breakers turn fragile webhook delivery into resilient infrastructure. Combined with retry strategies, rate limiting, and dead letter queues, they are one of the pillars of webhook reliability.

Key implementation decisions:

- Threshold tuning: Balance between catching failures fast and avoiding false positives

- Distributed state: Use Redis for cross-worker coordination

- Recovery strategy: Gradual recovery prevents re-tripping on fragile endpoints

- Monitoring: Emit events for operational visibility

Whether you build your own or use a managed solution like Hook Mesh, this pattern is essential for production webhook systems handling any meaningful volume.

Related Posts

Webhook Retry Strategies: Linear vs Exponential Backoff

A technical deep-dive into webhook retry strategies, comparing linear and exponential backoff approaches, with code examples and best practices for building reliable webhook delivery systems.

Webhook Rate Limiting: Strategies for Senders and Receivers

A comprehensive technical guide to webhook rate limiting covering both sender and receiver perspectives, including implementation strategies, code examples, and best practices for handling high-volume event delivery.

Webhook Dead Letter Queues: Complete Technical Guide

Learn how to implement dead letter queues (DLQ) for handling permanently failed webhook deliveries. Covers queue setup, failure criteria, alerting, and best practices for webhook reliability.

Webhook Observability: Logging, Metrics, and Tracing

A comprehensive technical guide to implementing observability for webhook systems. Learn about structured logging, key metrics to track, distributed tracing with OpenTelemetry, and alerting best practices.

From 0 to 10K Webhooks: Scaling Your First Implementation

A practical guide for startups on how to scale webhooks from your first implementation to handling 10,000+ events per hour. Learn what breaks at each growth phase and how to fix it before your customers notice.